07 Apr

On March 31, 2026, a single misplaced file inside an npm package update exposed approximately 512,000 lines of TypeScript code — and with it, some of the most controversial internal design decisions Anthropic had ever made.

The Anthropic Claude Code source code leak began at roughly 04:23 UTC when Chaofan Shou, a security intern at Solayer Labs posting under the handle @Fried_rice, spotted something unusual in a freshly published Claude Code package. Within hours, the code had spread to thousands of GitHub repositories, a Python clean-room rewrite had appeared, and decentralized mirrors had gone live with explicit commitments to resist takedowns. By the time Anthropic pulled the package from npm — within hours of public disclosure — the genie was already out of the bottle.

This article breaks down exactly what happened, what the code revealed, and why some of the findings inside those 1,906 files sparked a debate that goes far beyond a simple packaging mistake.

Key Takeaways 🔑

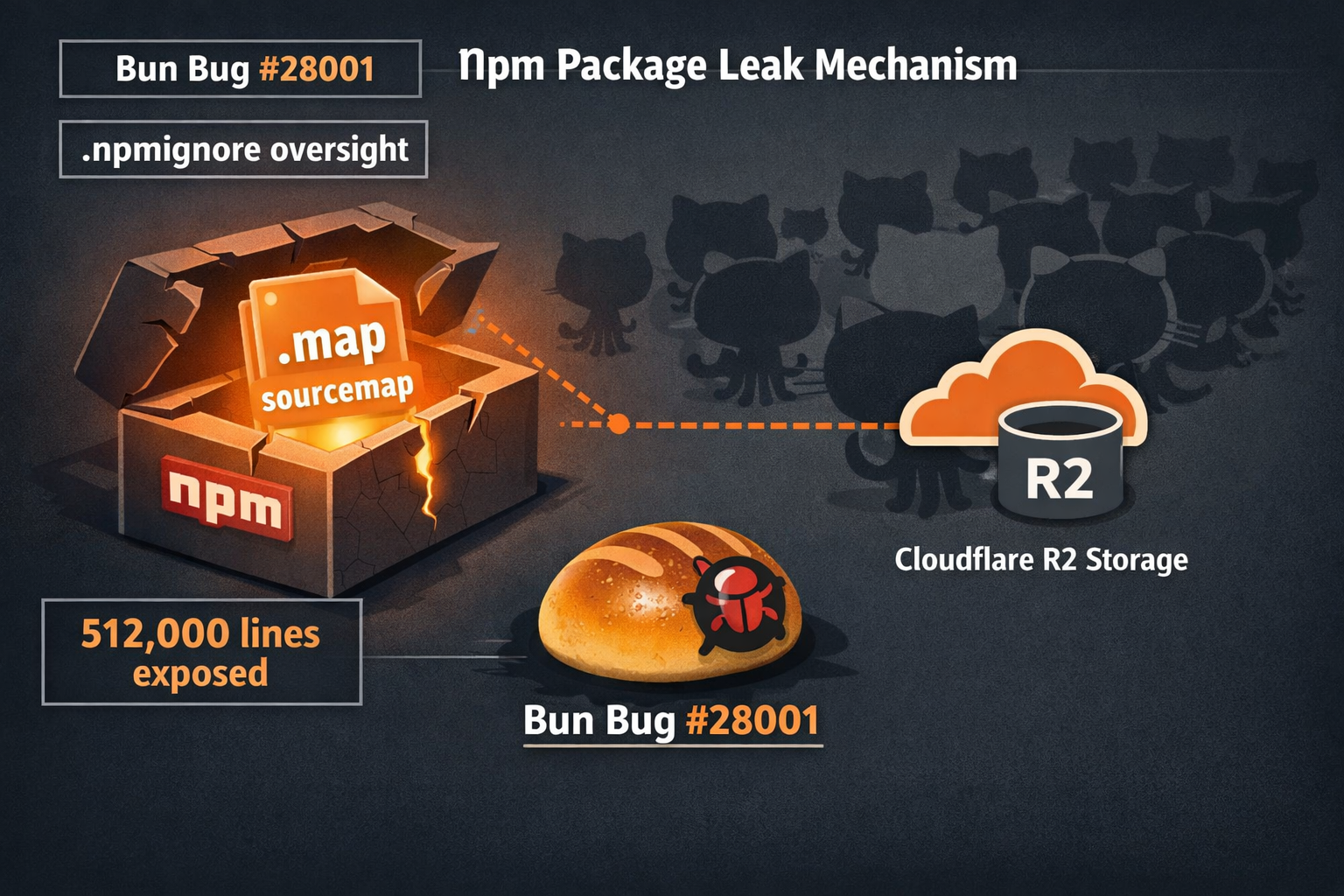

- The leak was caused by two intersecting failures, not a single act of carelessness: a known bug in the Bun JavaScript runtime (issue #28001, filed 20 days before the leak) and a missing *.map entry in .npmignore.

- The most controversial discovery was undercover.ts, a file instructing Claude to hide AI attribution in open-source commits when activated by Anthropic employee accounts.

- Frustration tracking is analytics telemetry, not behavioral adaptation: a regex pattern logs swear words to an internal dashboard called the “f***s chart.”

- Anthropic’s initial DMCA campaign targeted 8,100+ repositories before being retracted and narrowed to just 1 repo and 96 forks.

- This was reportedly the third time Claude Code had leaked source maps via npm, with the first incident occurring on launch day, February 24, 2025.

What Leaked and How: The npm Source Map Incident and the Bun Runtime Bug

To understand the Anthropic Claude Code source code leak, you need to understand two things that went wrong simultaneously — because neither one alone would have caused the incident.

The Packaging Oversight

When developers publish packages to npm, they use a file called .npmignore to exclude files that shouldn’t ship to end users — internal configs, test files, build artifacts. Claude Code’s .npmignore was missing a *.map entry. That meant JavaScript source map files, which contain references back to the original TypeScript source, were included in the published package.
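As a rough illustration of the kind of guard that would catch this gap, the sketch below filters a package's file list for source maps before publishing. The function name `findSourceMaps` and the sample paths are hypothetical. In practice the list could come from a dry-run listing such as `npm pack --dry-run`, and nothing here reflects Anthropic's actual release tooling.

```typescript
// Hypothetical pre-publish guard: report any source map files in a package's file list.
// The file list is passed in directly so the sketch stays self-contained.
function findSourceMaps(files: string[]): string[] {
  // Matches files ending in .map (for example cli.js.map), case-insensitively.
  return files.filter((file) => /\.map$/i.test(file));
}

// Example file list (made-up paths, for illustration only).
const packagedFiles = ["package.json", "dist/cli.js", "dist/cli.js.map", "README.md"];
const leakedMaps = findSourceMaps(packagedFiles);

if (leakedMaps.length > 0) {
  console.log(`Refusing to publish: source map(s) found: ${leakedMaps.join(", ")}`);
}
```

A check like this complements, rather than replaces, the *.map entry in .npmignore: the ignore rule prevents the file from shipping, while the check fails loudly if the rule is ever missing.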

One of those source map files pointed to a publicly accessible zip archive on Anthropic’s Cloudflare R2 storage bucket. That zip contained the full TypeScript source code — all 512,000 lines of it across 1,906 files.

The Bun Runtime Bug

Here’s where it gets more complicated. Anthropic had acquired Bun, the fast JavaScript runtime and bundler, in late 2025. The Claude Code toolchain runs on Bun. On March 11, 2026, exactly 20 days before the leak, a bug was filed against Bun as issue #28001. The bug documented that Bun generates source maps in production builds even when source map generation is explicitly disabled in configuration.

In other words, even if someone on the Claude Code team had tried to turn off source maps, Bun would have generated them anyway. The bug was open, known, and unresolved at the time of the leak.
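Because a bundler setting alone could not be relied on here, one defensive option is to inspect the build output itself. The sketch below checks bundled JavaScript text for a `sourceMappingURL` comment, the conventional marker that links generated code to its map. The function name and sample strings are assumptions for illustration, and the code does not reproduce Bun's behavior or the specifics of issue #28001.

```typescript
// Hypothetical post-build check: does the bundled output reference a source map?
// Looks for the conventional "//# sourceMappingURL=" comment (or the legacy "//@" form).
function referencesSourceMap(bundleText: string): boolean {
  return /^\s*\/\/[#@]\s*sourceMappingURL=\S+/m.test(bundleText);
}

// Made-up bundle contents for illustration.
const cleanBundle = "console.log('hello');\n";
const mappedBundle = "console.log('hello');\n//# sourceMappingURL=cli.js.map\n";

console.log(referencesSourceMap(cleanBundle));  // false
console.log(referencesSourceMap(mappedBundle)); // true
```

Checking the artifact rather than the configuration is the design point: it catches the problem whether it comes from a misconfiguration or from a tool ignoring its settings.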

“This wasn’t just a developer forgetting to update a file. It was the intersection of a runtime bug and a packaging oversight — two separate failures that compounded each other.”

Boris Cherny, the Claude Code engineer who confirmed the root cause publicly, described it as a release packaging issue. Anthropic’s official statement characterized it as “human error, not a security breach,” noting that no customer data or credentials were exposed. That framing is technically accurate — but it understates the structural nature of the failure.

Notably, this was reportedly the third time Claude Code had leaked source maps through npm. The first incident occurred on launch day, February 24, 2025. The pattern suggests the .npmignore gap was never fully resolved after earlier incidents.

Inside the Code: What 512,000 Lines Reveal About Claude Code’s Architecture

The leaked repository spans 1,906 TypeScript files. Some sources cited a figure closer to 600,000 lines, likely because they were counting bundled dependencies alongside core application code. The core figure of approximately 512,000 lines is the more precise count for Anthropic-authored code.

What researchers found inside was a sophisticated, production-grade system far more complex than Claude Code’s public documentation suggested.

The Architecture at a Glance

| Component | What It Does |
|---|---|
| Core agent loop | Manages multi-step tool use and context windows |
| Tool definitions | 40+ built-in tools for file I/O, shell, browser |
| Session management | Tracks conversation state across restarts |
| Feature flag system | Controls 44 unreleased capabilities |
| Analytics layer | Telemetry including frustration tracking |
| undercover.ts | Controversial attribution-hiding module |

The codebase confirmed that Claude Code is not simply a thin wrapper around the Claude API. It is a full agentic runtime with its own scheduling, error recovery, and background process management — closer in architecture to an operating system process manager than a chatbot interface.

The 44 Hidden Feature Flags: KAIROS, autoDream, and the Unreleased Product Roadmap

Among the most immediately newsworthy findings were 44 feature flags for capabilities not yet publicly announced.

Several stood out:

- Session review functionality — a mechanism for Claude to analyze past sessions to improve future performance, essentially a form of self-supervised refinement at the session level.

- Persistent assistant mode — a background process that keeps Claude running between explicit invocations, monitoring file changes and preparing context proactively.

- Remote control features — infrastructure allowing users to operate Claude Code from mobile devices or alternative browsers, not just the terminal.

- KAIROS — a scheduling system referenced in multiple files, apparently governing when certain agent behaviors activate based on time-of-day or workload signals.

- autoDream — a flag whose internal documentation described background “low-priority” processing during idle periods, with unclear implications for what that processing involves.
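For readers unfamiliar with feature flagging, the following is a generic sketch of how a flag gate typically works. Only the flag names KAIROS and autoDream come from the reporting. The `FlagRegistry` type, the `isEnabled` helper, and the on/off values are invented for illustration and say nothing about how Claude Code actually evaluates its flags.

```typescript
// Generic feature-flag gate (illustrative only; not Claude Code's implementation).
type FlagRegistry = Record<string, boolean>;

// Hypothetical flag states; the real values are not known from the article.
const flags: FlagRegistry = {
  KAIROS: false,
  autoDream: false,
};

// Unknown flags default to off, so unreleased code paths stay dormant.
function isEnabled(registry: FlagRegistry, name: string): boolean {
  return registry[name] === true;
}

if (isEnabled(flags, "autoDream")) {
  console.log("Would run idle-time background processing");
} else {
  console.log("autoDream is off; the gated code is skipped");
}
```

The relevant property is that the gated code ships inside the product either way. A flag only decides whether it runs, which is why flag names alone can reveal a roadmap.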

The Tamagotchi Easter Egg 🐾

One of the more whimsical discoveries was a fully implemented “Buddy/companion system” — internally nicknamed the Tamagotchi feature — with 18 distinct pet species coded in. The system had hardcoded rollout windows of April 1–7, 2026. The timing raised immediate speculation: was the leak accidental, or was it a controlled preview timed to coincide with an April Fools launch? Anthropic has not confirmed either interpretation.

The ANTI_DISTILLATION Flag ⚠️

Perhaps the most provocative flag was ANTI_DISTILLATION. When active, it injects fake tool definitions into Claude’s outputs — deliberately malformed or misleading function signatures designed to poison the training data of any competitor attempting to distill Claude Code’s behavior into their own models.

This is a known technique in the AI industry, but seeing it explicitly implemented and named in production code was striking. Critics described it as aggressive competitive behavior; defenders noted that protecting proprietary model behavior from unauthorized distillation is a legitimate business interest.

Undercover Mode: The Most Controversial Finding

Of everything exposed in the Anthropic Claude Code source code leak, nothing generated more sustained debate than a 90-line file called undercover.ts.

What It Does

The file implements a mode that activates when the environment variable USER_TYPE is set to 'ant' — the internal identifier for Anthropic employees. When active, it instructs Claude to:

- Never mention that it is an AI in commit messages or pull request descriptions when working in public or open-source repositories

- Strip all Co-Authored-By attribution from git commits, the standard mechanism developers use to credit AI tools

- Hide internal codenames including Capybara, Tengu, and others from any externally visible output

- Never reference “Claude Code” by name in any public-facing contribution

There is no force-off switch for external users because the mode is dead-code-eliminated in non-Anthropic builds — it simply doesn’t exist in the version most developers use. But for Anthropic employees contributing to open-source projects, it runs silently.
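To make the gating mechanism concrete, here is a minimal sketch of behavior keyed on a user type. It is not the contents of undercover.ts: the function names, the `UserType` union, and the trailer-stripping logic are all hypothetical, written only to show how a check on 'ant' could separate internal from external behavior. In a real bundler setup the user type would typically be a build-time constant, which is what allows the gated branch to be removed from external builds entirely.

```typescript
// Illustrative sketch of user-type gating; NOT the contents of undercover.ts.
type UserType = "ant" | "external";

// Remove Co-Authored-By trailer lines from a commit message.
function stripCoAuthorTrailers(message: string): string {
  return message
    .split("\n")
    .filter((line) => !/^co-authored-by:/i.test(line.trim()))
    .join("\n")
    .trimEnd();
}

// Hypothetical gate: only the internal user type takes the stripping path.
function prepareCommitMessage(message: string, userType: UserType): string {
  if (userType === "ant") {
    return stripCoAuthorTrailers(message);
  }
  return message;
}

// Made-up commit message for illustration.
const draft = "Fix parser bug\n\nCo-Authored-By: Example Bot <bot@example.com>";
console.log(prepareCommitMessage(draft, "external") === draft); // true
console.log(prepareCommitMessage(draft, "ant"));                // Fix parser bug
```

When the comparison value is fixed at build time, a bundler can prove the internal branch is unreachable in external builds and drop it, which matches the article's description of the mode as absent rather than merely disabled.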

The Ethical Debate

“If an Anthropic engineer submits a pull request to an open-source project and Claude wrote the code, the maintainer has no way of knowing. That’s not a feature — that’s deception.” — Widely circulated criticism across developer forums following the leak

The criticism is pointed. Open-source communities have developed norms around AI attribution precisely because it matters for license compliance, contributor agreements, and trust. Many projects explicitly require disclosure of AI-generated contributions. undercover.ts systematically circumvents those norms for Anthropic’s own employees.

Defenders offered a different reading: the primary purpose of the mode is to prevent internal codenames like Capybara and Tengu from leaking into public repositories — a legitimate operational security concern. The AI attribution stripping, they argued, is a secondary effect of a broader confidentiality mechanism.

Both readings can be simultaneously true. But the absence of any opt-in or opt-out mechanism — and the fact that the behavior was never publicly disclosed — is what critics found hardest to defend. Anthropic has not issued a specific statement addressing undercover.ts as of publication.

The Frustration Detection System: Regex, Not AI

Early coverage of the leak described a “frustration detection system” that supposedly adapted Claude’s responses in real time based on user emotional state. That framing was incorrect, and it’s worth correcting clearly.

What It Actually Is

The system lives in a file called userPromptKeywords.ts. It is a simple regex pattern matcher that scans user input for swear words and frustration phrases — things like “wtf,” “ffs,” “this sucks,” and similar expressions.

It does not change how Claude responds. It does not trigger a more empathetic tone or a different response strategy. What it does is log a signal to an internal analytics dashboard.

Boris Cherny confirmed this publicly: the dashboard is informally called the “f***s chart”, and it exists to measure user experience quality over time. If the f***s chart spikes after a release, that’s a signal that something in the product is frustrating users. It is telemetry, not behavioral adaptation.

This distinction matters. Telemetry that measures frustration is a standard UX practice. A system that secretly modifies AI behavior based on detected emotional state would be something far more ethically complex. The leaked code shows the former, not the latter.
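The telemetry-only pattern described above can be sketched in a few lines. The phrase list is limited to the examples quoted in this article, and the counter is a stand-in for a dashboard. The names `FRUSTRATION_PATTERN` and `trackFrustration` are hypothetical and are not taken from userPromptKeywords.ts.

```typescript
// Illustrative keyword matcher; the phrases are only the examples quoted in the article.
const FRUSTRATION_PATTERN = /\b(wtf|ffs|this sucks)\b/i;

// Hypothetical telemetry sink: an in-memory counter standing in for a dashboard.
let frustrationEvents = 0;

// Records a signal when the prompt matches. The prompt is returned unchanged,
// mirroring the point that the response path is not altered.
function trackFrustration(prompt: string): string {
  if (FRUSTRATION_PATTERN.test(prompt)) {
    frustrationEvents += 1;
  }
  return prompt;
}

trackFrustration("wtf, the build broke again");
trackFrustration("please rename this variable");
console.log(frustrationEvents); // 1
```

Nothing downstream reads the counter to change a reply, which is the practical difference between measurement and adaptation.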

The Copyright Takedown Strategy: Anthropic’s Legal Response and Its Limits

Anthropic’s legal response to the leak unfolded in two very different phases.

Phase One: The Broad Sweep

Within hours of the leak going viral, Anthropic issued DMCA takedown notices targeting more than 8,100 GitHub repositories. The notices were sweeping — and almost immediately controversial.

Boris Cherny himself acknowledged the campaign was “catastrophically broad,” sweeping up repositories that had nothing to do with the leaked source code. Forks of unrelated coding tutorials, educational repositories about Claude’s API, and skills-based learning projects were all caught in the initial dragnet. GitHub’s DMCA process requires repository owners to file counter-notices and wait, meaning many legitimate projects were temporarily inaccessible through no fault of their own.

Phase Two: The Retraction

Faced with public backlash and the practical reality that the code was already distributed across decentralized mirrors, Anthropic retracted the vast majority of the notices. The campaign was narrowed to just 1 repository and 96 of its direct forks — the repositories most clearly containing the accidentally released source code.

The episode illustrated a fundamental tension in post-leak legal strategy: aggressive takedowns generate PR damage and rarely succeed against determined distribution, while narrow takedowns preserve goodwill but leave the code widely accessible.

A Python clean-room rewrite called claw-code, authored by developer Sigrid Jin, appeared the same day as the leak. This is a critical distinction: claw-code is not a mirror of the leaked TypeScript source. It is an independent Python reimplementation written from scratch using the leaked code as a reference for architecture and behavior. It reached 50,000 GitHub stars in under two hours and accumulated more than 41,500 forks shortly after. Because it is a clean-room rewrite rather than a copy, it sits in a legally distinct position from direct mirrors of the leaked code. Decentralized mirrors on services like Gitlawb went live with explicit commitments to resist future takedowns.

Boris Cherny confirmed that no one at Anthropic was fired as a result of the incident.

What This Means for AI Privacy and Transparency

The Anthropic Claude Code source code leak is not just a story about a packaging mistake. It surfaces several deeper questions the AI industry has not yet resolved.

Attribution and Accountability

The undercover.ts finding puts a concrete face on an abstract debate. When AI tools contribute to open-source software without disclosure, who is accountable for the output? If a bug is introduced by AI-generated code in a project where the maintainer didn’t know AI was involved, does that change the ethical calculus? The open-source community’s attribution norms exist for reasons — and those reasons don’t disappear because a company finds them inconvenient.

Feature Flags and the Consent Gap

Forty-four unreleased features were running in various states of activation in a production tool used by professional developers. Users had no visibility into which flags were active for their accounts, what data the session review system was collecting, or what the persistent assistant mode was doing in the background.

This is not unique to Anthropic — feature flagging is universal in software development. But the scale and nature of the flags in Claude Code, combined with the tool’s deep access to users’ codebases and development environments, raises the stakes considerably.

Competitive Tactics and Industry Norms

The ANTI_DISTILLATION flag is a window into how AI companies think about competitive moats. Poisoning training data does not appear to violate any specific statute, but it represents a form of adversarial behavior directed at the broader AI ecosystem. As these tactics become more common, they may prompt calls for industry standards around training data integrity.

The Bun Acquisition Risk

Anthropic’s acquisition of Bun brought a fast, capable runtime into its toolchain. It also brought an open bug tracker and an inherited codebase. The fact that a known Bun bug contributed directly to this leak is a reminder that acquiring infrastructure companies means acquiring their technical debt — including unresolved issues that can surface in production at the worst possible moments.

Anthropic’s Public Response and What Comes Next

Anthropic’s official statement was measured and consistent with the company’s general communications style: the incident was characterized as a packaging error, not a security breach. The company emphasized that no customer data, API keys, or credentials were exposed — a factually accurate point that nonetheless left many observers unsatisfied given the nature of what was revealed.

A patched version of Claude Code was released promptly following the disclosure. Anthropic did not confirm specific version details publicly.

The company has not addressed undercover.ts, the ANTI_DISTILLATION flag, or the Buddy/Tamagotchi system in any official capacity as of publication. The second security incident referenced in coverage — involving internal files on a publicly accessible system, including a draft blog post mentioning the “Mythos” model codename — compounded the narrative of a company experiencing an unusual concentration of operational security failures in a short window.

What comes next depends heavily on how Anthropic chooses to engage with the transparency questions the leak raised. A technical fix — better .npmignore hygiene, a resolved Bun bug — addresses the mechanism of the leak. It does not address the policy questions about undercover.ts or feature flag disclosure.

FAQ ❓

Was any user data exposed in the leak? No. Anthropic confirmed that no customer data, API keys, or credentials were included in the leaked files. The exposure was limited to Anthropic’s proprietary source code.

What is claw-code, and is it legal? claw-code is a clean-room Python rewrite of Claude Code’s architecture, authored independently by developer Sigrid Jin. It is not a copy of the leaked TypeScript source — it is a new implementation inspired by the leaked code’s design. Its legal status is distinct from direct mirrors of the original code.

Did Anthropic fire anyone over the leak? Boris Cherny confirmed publicly that no one was fired.

Was the leak intentional? Anthropic has consistently characterized it as accidental. The timing overlap with the Buddy/Tamagotchi April 1–7 rollout window generated speculation, but no evidence of intentionality has emerged.

What is the Bun bug and has it been fixed? Bun issue #28001, filed March 11, 2026, documents that Bun generates source maps in production even when disabled in configuration. The status of the fix should be checked directly in the Bun GitHub repository for the most current information.

Is undercover.ts active in the version of Claude Code I use? No. The mode is dead-code-eliminated in external user builds. It only activates when USER_TYPE === 'ant', which applies to Anthropic internal accounts.

How many times has Claude Code leaked source maps? This was reportedly the third incident. The first occurred on launch day, February 24, 2025.

Conclusion: A Leak That Changed the Conversation

The Anthropic Claude Code source code leak will be remembered less for the packaging error that caused it and more for what the code contained. A frustration tracker feeding an internal “f***s chart.” An anti-distillation system poisoning competitor training data. Forty-four unreleased features running invisibly in a tool with deep access to professional developers’ codebases. And undercover.ts — a file that, whatever its intended purpose, ensures that Anthropic employees’ AI-assisted open-source contributions carry no AI attribution.

Here’s what developers, enterprises, and policymakers should take away:

- Audit the tools in your stack. Claude Code is not unique in having hidden telemetry and feature flags — but this leak is a reminder to ask vendors what their tools are actually doing.

- Push for attribution standards. The open-source community should formalize AI contribution disclosure requirements in contributor agreements and project governance documents.

- Watch the Bun bug tracker. If your organization uses Bun in production, issue #28001 is worth monitoring for resolution status.

- Treat feature flag transparency as a procurement criterion. Enterprise buyers of AI development tools should ask vendors to disclose what feature flags are active in their builds.

- Follow Anthropic’s response to the policy questions. The technical fix is straightforward. Whether the company addresses the ethical questions raised by undercover.ts will say considerably more about its values than any press release.

The code is out. The mirrors are live. The conversation about what AI tools do in the dark — and who they tell — has only just begun.
